For years, keeping children off social media meant asking them to check a box. Norway has decided that era is over. On April 24, 2026, the Norwegian government formally announced plans to raise the country’s social media age limit from 13 to 16 and, more importantly, to shift the legal burden of enforcement from parents and children onto the tech platforms themselves. If a child under 16 is found on Instagram, TikTok, or Snapchat, it will no longer be the family’s problem. It will be Meta’s, TikTok’s, or Snap’s, and they could face fines of up to NOK 20 million for failing to keep minors out.

Prime Minister Jonas Gahr Støre has called it an “uphill battle” against tech giants, but the political will behind the move is unusually strong. The reason is simple: Norway’s previous age limit of 13 was barely a speed bump. Surveys found that more than half of all nine-year-olds in the country were already active on social media: proof, the government argues, that voluntary compliance and checkbox age gates have completely failed.

Why raising the age limit is only half the story

The headline number, 16, is almost beside the point. The real shift in Norway’s proposed law is about who is responsible when that limit is broken. Under the current system, a child claiming to be 13 by ticking a box is enough to legally protect a platform. Under the proposed amendment to the Personal Data Act, that legal cover disappears entirely. Platforms will be required to actively verify that their users are old enough, not just ask them.

To avoid creating another kind of unfairness, the law uses a clever cohort system rather than tracking individual birthdays. Access would be unlocked on January 1st of the year a child turns 16, ensuring that whole school year groups gain access together. No more situations where one student has Instagram and their best friend, born three months later, does not.
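The cohort rule described above can be expressed as a small date calculation. The following is a minimal sketch, not text from the proposed law: the function names `access_unlock_date` and `may_access` are hypothetical, and it assumes the rule is exactly "January 1st of the calendar year in which the child turns 16".

```python
from datetime import date

AGE_LIMIT = 16  # proposed threshold; the names below are illustrative only


def access_unlock_date(birth_date: date, age_limit: int = AGE_LIMIT) -> date:
    """Return January 1st of the calendar year in which the person turns `age_limit`."""
    return date(birth_date.year + age_limit, 1, 1)


def may_access(birth_date: date, today: date, age_limit: int = AGE_LIMIT) -> bool:
    """True once the whole birth-year cohort has been unlocked."""
    return today >= access_unlock_date(birth_date, age_limit)


# Two friends born three months apart in the same year unlock on the same day.
friend_a = date(2010, 2, 10)
friend_b = date(2010, 5, 10)
same_day = access_unlock_date(friend_a) == access_unlock_date(friend_b)
```

Because only the birth year enters the calculation, a platform applying this rule would need to know a user's year of birth rather than the exact date, which is one reason a cohort rule is simpler to check than an individual birthday.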

How would they actually verify age?

This is where the debate gets genuinely complex, and where Norway’s proposal is being scrutinised most closely. Several methods are under discussion, each with its own trade-offs.

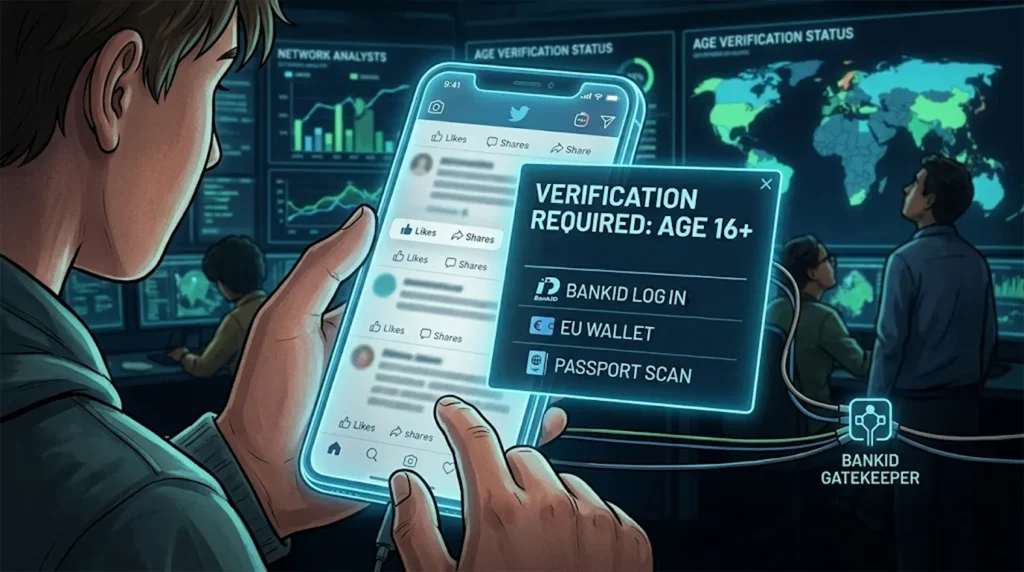

BankID integration is the frontrunner. Norway operates one of the world’s most advanced national digital identity systems, and children can obtain a BankID from as young as 12. Platforms could be required to use it as a login gateway, but that raises immediate concerns about handing a national ID infrastructure over to private tech companies.

The EU Digital Identity Wallet, a new app announced by the European Commission on April 15, 2026, offers a more privacy-preserving alternative, and Norway, as an EEA member, can take advantage of it. It allows users to confirm they meet an age threshold without revealing their full name, address, or birthdate to the platform.

Some platforms may also turn to AI-based age estimation, which uses a device’s camera to infer a person’s age from facial features. Norway’s data protection authority, Datatilsynet, has already expressed deep scepticism about this approach, warning that it introduces new biometric privacy risks in the process of solving an old problem.

Norway’s data protection authority has cautioned that forcing all users, including adults, to upload a passport or verify via BankID just to browse TikTok creates a massive “honeypot” of private data that platforms should never hold. The government’s response: the EU’s Digital Services Act (DSA) framework will provide the enforcement teeth to ensure that verification data is handled safely and not retained or monetised.

Big Tech’s response: public diplomacy, private resistance

The platforms have not taken the announcement quietly. Their response follows a familiar playbook: acknowledge child safety concerns loudly while quietly pushing back on every enforcement mechanism that would cost them users.

Google and YouTube were first to issue a statement, arguing that they have spent over a decade building “age-appropriate experiences” and that a blanket ban will push young people toward “darker, less regulated corners of the internet.” Meta and Snap have echoed that argument while also raising compliance objections, insisting that mandatory government ID checks at login violate the privacy of all their users, not just minors.

The platforms have also pointed to what happened in Australia, which enacted a similar under-16 ban in December 2025. Millions of accounts were deactivated, but many minors simply returned using VPNs or secondary “ghost” accounts, raising genuine questions about whether hard bans change behaviour or merely displace it.

“Children are being taken over by algorithms.”— Prime Minister Jonas Gahr Støre, April 24, 2026

Behind the scenes, however, the platforms’ real concern is not Norway. With only 5.5 million people, Norway is a small market. What terrifies them is the regulatory domino effect. If Norway successfully forces BankID-style verification on Meta, then France, Spain, and the entire European Union will demand the same. Expect legal challenges and slow-walking of implementation as platforms wait to see how the EU Digital Identity Wallet and the Digital Services Act are finalised.

Adding an unlikely layer of intellectual cover, more than 370 technology scientists recently signed an open letter warning that mandatory ID checks “kill anonymity” for everyone online and create mass surveillance risks, arguments the industry has eagerly amplified in parliamentary debates.

Norway is not alone: a global race to 16

What makes Norway’s move particularly significant is the company it is keeping. In the space of just a few months, a clear international consensus has formed around the age of 16 as a threshold for unrestricted social media access, and around the idea that platforms, not parents, must be the enforcers.

Global age limits at a glance — April 2026

| Country | Age Limit | Status | Verification Method |
| --- | --- | --- | --- |
| Australia | 16 | Active | Biometrics / ID Estimation |
| Spain | 16 | Active | National Digital ID |
| Norway | 16 | Proposed | Likely BankID / EU Wallet |
| France | 15 | Pending | Government “Digital Pass” |
| Denmark | 15 | Planned | Age Verification App |

Australia was the pioneer, enacting the world’s strictest model in December 2025 with fines of up to AUD 50 million for platforms that fail to block minors. Spain followed in February 2026, becoming the first EU country to pass an under-16 ban. Prime Minister Pedro Sánchez memorably described social media as a “failed state” requiring strict policing. The UK is in the final stages of consultation, while France and Denmark are converging on a slightly lower threshold of 15, with France also banning smartphones in high schools from September 2026.

The European Union is trying to unify these diverging national rules through a single framework. The EU’s new Age Verification App, declared technically ready on April 15, 2026, is designed to let users prove their age without sharing their identity, a potential solution to the privacy paradox at the heart of all these bans.

The deeper shift: from consent to protection

There is something more fundamental happening beneath the policy debate. For years, the phrase “digital age of majority” referred narrowly to the age at which a child could consent to having their data processed without a parent’s signature. Today, it means something different and more ambitious: the age at which a person is considered psychologically resilient enough to navigate algorithmic feeds without state protection.

That is a significant philosophical shift, and one that Big Tech has not yet found a convincing answer to. Parental controls, content filters, and self-regulatory pledges have all been tried, and surveys like the one showing half of Norwegian nine-year-olds on social media suggest they have not worked. The question now is whether hard bans and mandatory ID verification will fare any better, or whether determined teenagers will simply find the workarounds that teenagers have always found.

Norway’s answer, at least for now, is that the responsibility for preventing that should sit with the platforms, not with the children.